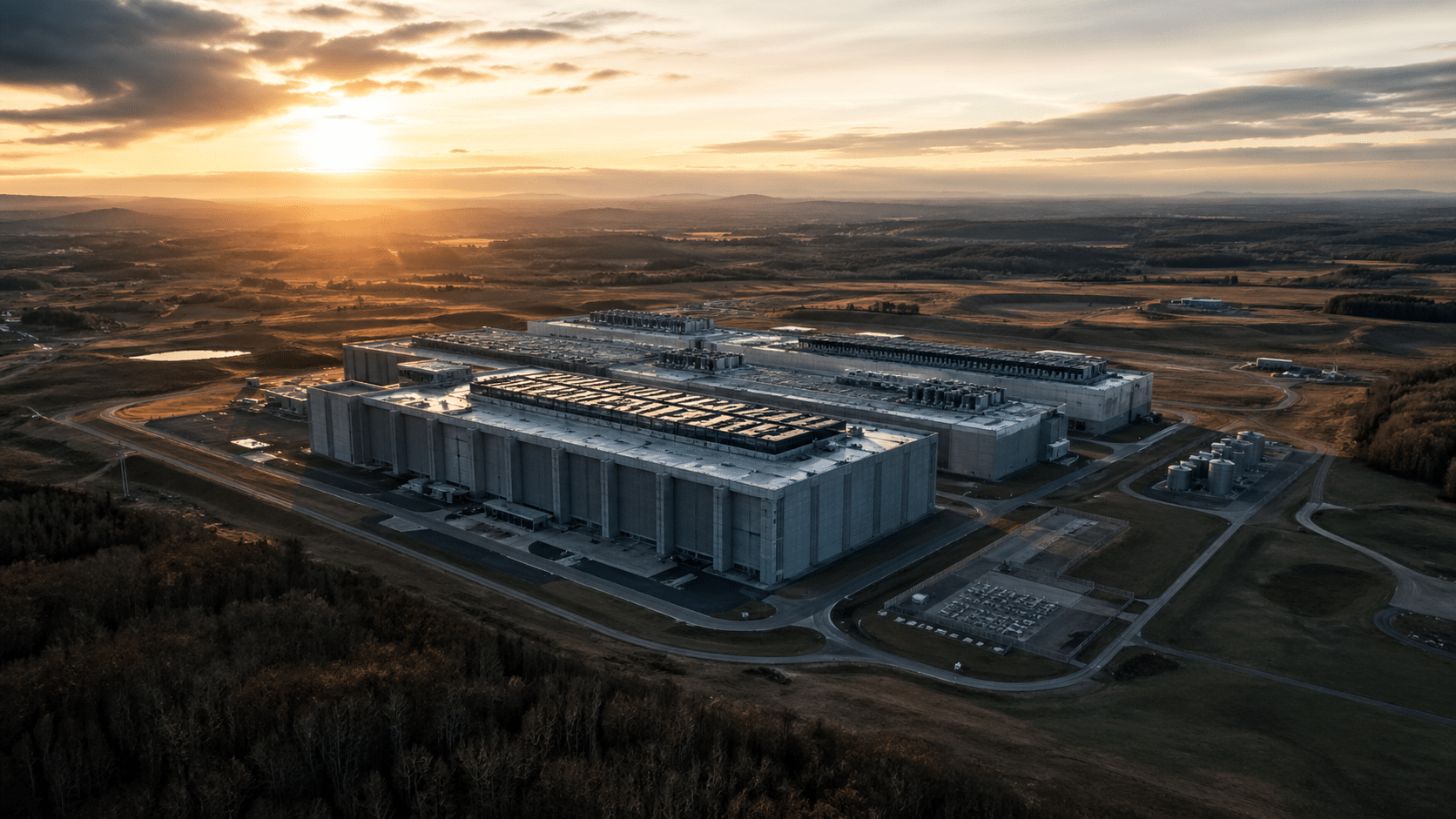

AI data centers are the new industrial monuments of our time. They’re massive, expensive, power-hungry, and often planted in places that never asked for them. From the outside, they look like quiet concrete warehouses. Inside, they’re closer to a high-security power plant that just happens to run math at insane speeds.

And here’s the twist: technology has a habit of shrinking. What looks “required” today can look wildly oversized a few years from now. If AI hardware and software become dramatically more efficient — meaning we can get the same output from a fraction of the space — then we’re left with a real question nobody likes to ask while the boom is booming:

What do we do with all these giant data centers if we don’t need them like we thought we would?

Why AI Data Centers Are So Massive

They’re Not Just “Server Rooms”

A modern AI data center is an ecosystem, not a closet full of computers. The facility houses compute halls, networking infrastructure, physical security layers, and full-time operations, all working together to keep AI workloads running without interruption. Most of the square footage isn’t directly “doing AI” at all. It’s the surrounding infrastructure (the support systems, redundancies, and utilities) that keeps the actual compute stable, cooled, and online around the clock.

What’s Actually Inside

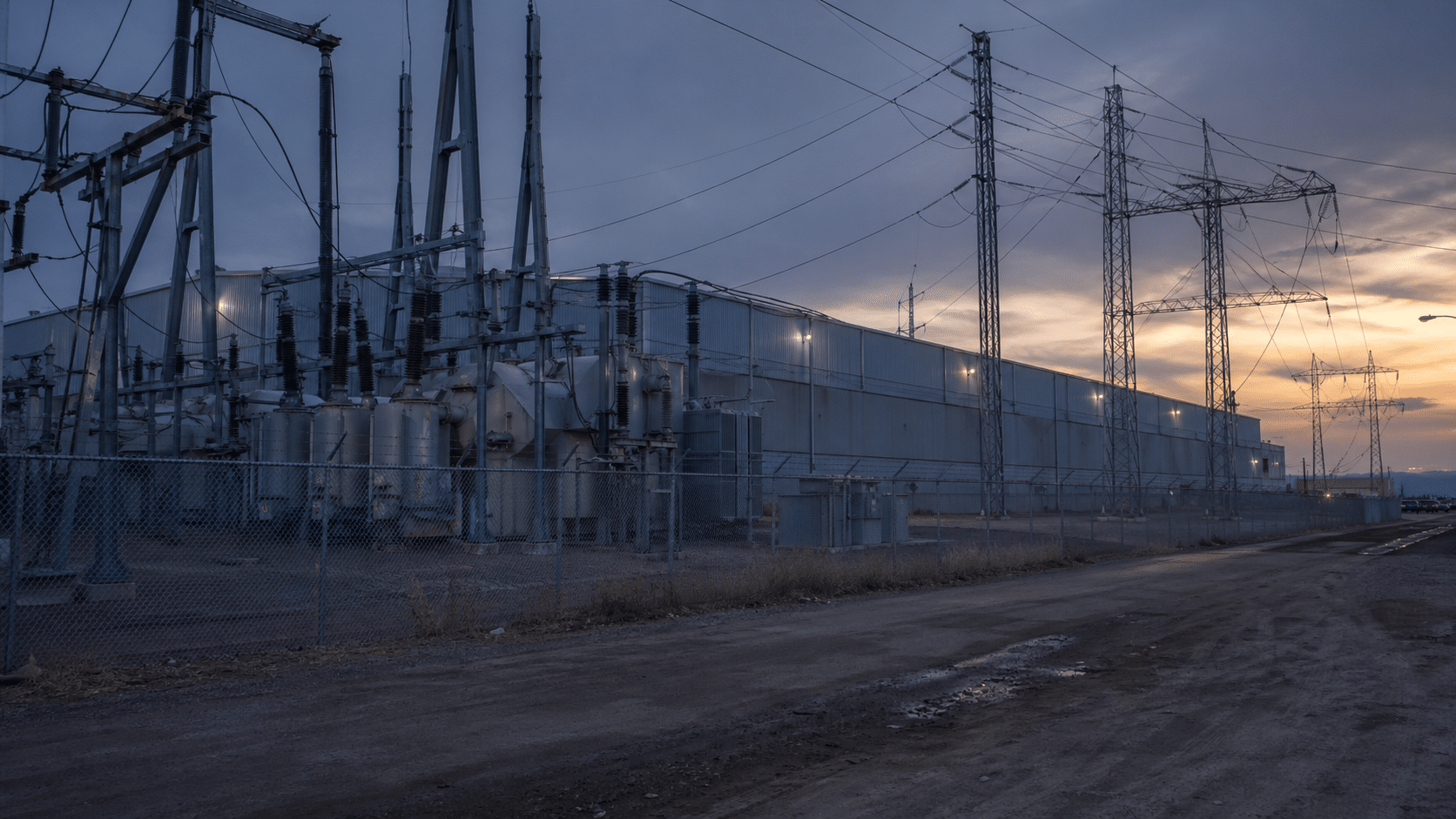

Walk through one of these facilities and you’ll find far more than racks of servers. The compute halls hold the GPU-dense servers that do the heavy lifting, but they’re surrounded by a network backbone of fiber, high-capacity switches, and redundant pathways that keep data moving without bottlenecks. The power chain alone (transformers, switchgear, UPS systems, and diesel backup generators) takes up significant physical space. Cooling systems, whether chillers, heat exchangers, or cooling towers, occupy entire wings. Layer in controlled entry zones, monitoring rooms such as the network and security operations centres (NOC and SOC), staging bays, and maintenance space, and you begin to understand why these buildings span hundreds of thousands of square feet.

Redundancy Is the Hidden Reason They Sprawl

The real driver of data center size isn’t compute; it’s uptime. When a facility is designed to guarantee 99.999% availability, “two of everything” stops being a luxury and becomes an engineering requirement. Backup power for the backup power. Redundant cooling circuits. Failover network paths. Every layer of redundancy adds physical footprint, mechanical complexity, and space that sits idle unless something goes wrong. The building is designed to absorb failures without going down, which means the extra space isn’t waste. It’s insurance, written in concrete and steel.
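
To put the 99.999% figure in perspective, the downtime it allows is simple arithmetic: the unavailable fraction multiplied by the minutes in a year. A minimal sketch, assuming a 365-day year (the function name is illustrative, not from any standard):

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes in a 365-day year


def allowed_downtime_minutes(availability: float) -> float:
    """Return the downtime per year permitted by an availability target (0 to 1)."""
    return (1.0 - availability) * MINUTES_PER_YEAR


# Compare common "nines" targets.
for target in (0.99, 0.999, 0.9999, 0.99999):
    print(f"{target:.3%} availability -> {allowed_downtime_minutes(target):,.1f} min/year")
```

At five nines, that works out to roughly five minutes of downtime a year, which is why every single point of failure gets duplicated.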

Why AI Data Centers Need So Much Power

AI Is Compute-Dense by Nature

Training and running large AI models requires sustained, high-performance computation with no tolerance for power instability. Modern GPU racks draw far more power than a traditional enterprise data center is built to handle, and the facility must deliver that power cleanly and consistently, 24 hours a day. A single high-density AI rack can draw 40 to 100 kilowatts or more, compared to 5 to 10 kilowatts for a standard enterprise rack. Scale that across thousands of racks and the numbers become staggering.
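
The scaling claim is easy to check with the per-rack ranges quoted above. A small sketch, assuming a hypothetical hall of 1,000 racks (the rack count is illustrative, not taken from any specific facility):

```python
def hall_load_mw(racks: int, kw_per_rack: float) -> float:
    """Total IT load in megawatts for a number of racks at a given per-rack draw (kW)."""
    return racks * kw_per_rack / 1000.0


RACKS = 1_000  # hypothetical rack count

# Low and high ends of each per-rack range from the paragraph above.
enterprise = (hall_load_mw(RACKS, 5), hall_load_mw(RACKS, 10))
ai_dense = (hall_load_mw(RACKS, 40), hall_load_mw(RACKS, 100))

print(f"Enterprise racks: {enterprise[0]:.0f}-{enterprise[1]:.0f} MW")
print(f"AI-dense racks:   {ai_dense[0]:.0f}-{ai_dense[1]:.0f} MW")
```

The same number of racks draws somewhere between 4 and 20 times the power, before any cooling or conversion overhead is counted.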

It’s Not Just Consumption — It’s Overhead

The electricity that actually runs the servers is only part of the story. Power conversion introduces losses at every stage. UPS systems buffer against grid fluctuations and add conversion losses of their own. And critically, cooling the facility consumes enormous energy in its own right: the hotter the racks run, the more power must be spent moving that heat out of the building. Power for cooling and other overhead infrastructure can be a substantial share of a facility’s total draw, and in less efficient designs it can approach the power used by the compute itself.
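
The industry usually summarises this overhead as power usage effectiveness (PUE): total facility power divided by the power delivered to IT equipment. A PUE of 1.0 would mean zero overhead, and overhead equal to the compute load gives 2.0. A minimal sketch using hypothetical megawatt figures:

```python
def pue(it_load_mw: float, overhead_mw: float) -> float:
    """Power usage effectiveness: total facility power / IT equipment power."""
    if it_load_mw <= 0:
        raise ValueError("IT load must be positive")
    return (it_load_mw + overhead_mw) / it_load_mw


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility power that never reaches the IT equipment."""
    return 1.0 - 1.0 / pue_value


# Hypothetical 50 MW IT load under three overhead scenarios (cooling, UPS and conversion losses).
for overhead in (10.0, 25.0, 50.0):
    p = pue(50.0, overhead)
    print(f"overhead {overhead:.0f} MW -> PUE {p:.2f}, {overhead_share(p):.0%} of power is overhead")
```

A facility where overhead matches the compute load sits at a PUE of 2.0, meaning half of every megawatt drawn from the grid never reaches a GPU.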

The Grid Problem That Shows Up Later

Permitting a data center moves faster than upgrading a region’s electrical grid. A facility can be approved, built, and operational long before the surrounding transmission infrastructure catches up to the actual load. This is where community friction grows. Local substations get stressed. Utility rates for nearby residents can shift. Grid capacity that might have supported future residential or industrial growth gets absorbed. The benefits feel abstract and distant while the infrastructure pressure is immediate and local.

Why AI Data Centers Use So Much Water

Cooling Is the Real Story

Servers don’t idle. They run constantly at high load, generating heat relentlessly. That heat has to go somewhere, and managing it is one of the most complex engineering challenges in the entire facility. The cooling approach a data center chooses has massive implications — not just for energy efficiency, but for water consumption and the relationship with the surrounding community.

The Common Cooling Trade-Offs

Air cooling draws less water but typically demands more electricity and larger mechanical equipment. Evaporative cooling, which sheds heat by evaporating water, can reduce energy use significantly, but it consumes meaningful volumes of water, particularly during hot weather when cooling demand peaks. Liquid cooling, which circulates coolant directly to high-heat components, is growing rapidly for AI-dense racks because it can remove heat at densities that air struggles to handle, but it adds mechanical complexity and its own infrastructure requirements. There is no neutral choice: every method involves a trade-off between water, energy, and complexity.

Why This Sparks Local Backlash

Water is local in a way that electricity isn’t. It’s tied to agriculture, to drought years, to supplies that communities have watched diminish over decades. Even when a data center’s water use is technically within permitted limits, the optics are damaging in water-stressed regions. A facility consuming millions of gallons per year while local farmers face restrictions isn’t an abstraction; it’s a land-use conflict with a very visible address. This is one of the fastest-growing points of friction between data center developers and the communities being asked to host them.

Why AI Data Centers Get Built in Remote Counties

The Economics Are Simple

Large parcels of land are cheap. Zoning in rural counties is often easier to navigate. Areas competing for economic development tend to offer more aggressive tax incentive programmes. And critically, proximity to high-voltage transmission lines and substations matters far more than proximity to population centres. The people who will use the AI never need to be anywhere near the building. The building just needs power, land, and a road.

Why Those Counties Often Don’t Want Them

The promise is typically jobs and tax revenue. The reality is frequently more complicated. Construction employment is significant but temporary. Permanent operational headcount is often lean — a large facility may employ a few dozen full-time staff. The building is physically present, consuming land, water, and grid capacity, while the economic value it generates flows predominantly to shareholders and hyperscale clients who may be headquartered thousands of miles away. The trade can feel deeply unequal when the dust settles.

What Happens If AI Tech Shrinks the Need for Mega Facilities

The Realistic Ways AI Data Centers Could Need Less Footprint

The history of computing is a history of miniaturisation and efficiency gains. There is no particular reason to believe AI will be different. Better chips deliver higher performance per watt with each generation. Better model architectures reduce the raw compute required to achieve equivalent outputs. Smarter inference techniques — including smaller models with intelligent routing — reduce the burden on centralised infrastructure. Advances in cooling allow denser racks in smaller buildings. And the growth of edge AI, where processing happens closer to the end user rather than in a centralised facility, could meaningfully reduce the centralised load for large categories of applications.

Overbuilding Becomes a Real Risk

If any combination of these efficiency gains arrives faster than expected, the capacity built during the boom becomes stranded. The facilities exist. The land is used. The grid commitments are made. But the demand that justified all of it has changed shape. Counties could find themselves holding giant, specialised buildings that aren’t easy to repurpose and weren’t designed with any alternative use in mind. This is the structural tension that nobody wants to name during a boom cycle — but it’s exactly the tension that has played out with shopping malls, coal plants, and big-box retail before it.
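
The overbuilding risk comes down to a race between two growth rates: how fast demand for AI output grows, and how fast the output per unit of built capacity improves. If efficiency compounds faster than demand, the capacity actually needed shrinks relative to what was built. A rough sketch, where the built capacity and both annual rates are hypothetical inputs rather than forecasts:

```python
def capacity_needed(base_capacity: float, demand_growth: float,
                    efficiency_gain: float, years: int) -> float:
    """Capacity required after `years`, starting from today's requirement.

    Demand compounds at `demand_growth` per year, while output per unit of
    capacity compounds at `efficiency_gain` per year. Both rates are assumptions.
    """
    return base_capacity * ((1 + demand_growth) / (1 + efficiency_gain)) ** years


def stranded_fraction(built: float, needed: float) -> float:
    """Share of built capacity left unused (zero if demand outgrows supply)."""
    return max(0.0, 1.0 - needed / built)


BUILT = 100.0  # hypothetical capacity units, sized to today's demand

# (annual demand growth, annual efficiency gain) scenarios, all illustrative.
for demand, efficiency in ((0.30, 0.20), (0.20, 0.30), (0.10, 0.40)):
    needed = capacity_needed(BUILT, demand, efficiency, years=5)
    print(f"demand +{demand:.0%}/yr, efficiency +{efficiency:.0%}/yr -> "
          f"{needed:.0f} units needed, {stranded_fraction(BUILT, needed):.0%} stranded")
```

The specific numbers matter less than the shape: a modest gap between the two rates, compounded over a few years, is enough to leave a large share of a facility idle.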

10 Smart Ways to Repurpose Former AI Data Centers

So what does a sensible plan B actually look like? Here are ten repurposing paths that match the physical realities of these buildings with genuine market demand.

1. Indoor Sports Megaplex. The sheer scale of a data center floor plate, often 100,000 square feet or more of uninterrupted space, makes it a natural candidate for a regional sports destination. Volleyball courts, basketball, pickleball, futsal, and training facilities can all coexist under one roof. Add tournament infrastructure, spectator areas, and a food and beverage programme and you have a facility that draws from a wide regional catchment. For counties that gained a giant building but not much economic activity, a sports complex creates ongoing foot traffic, local employment, and community identity in a way that a server farm never could.

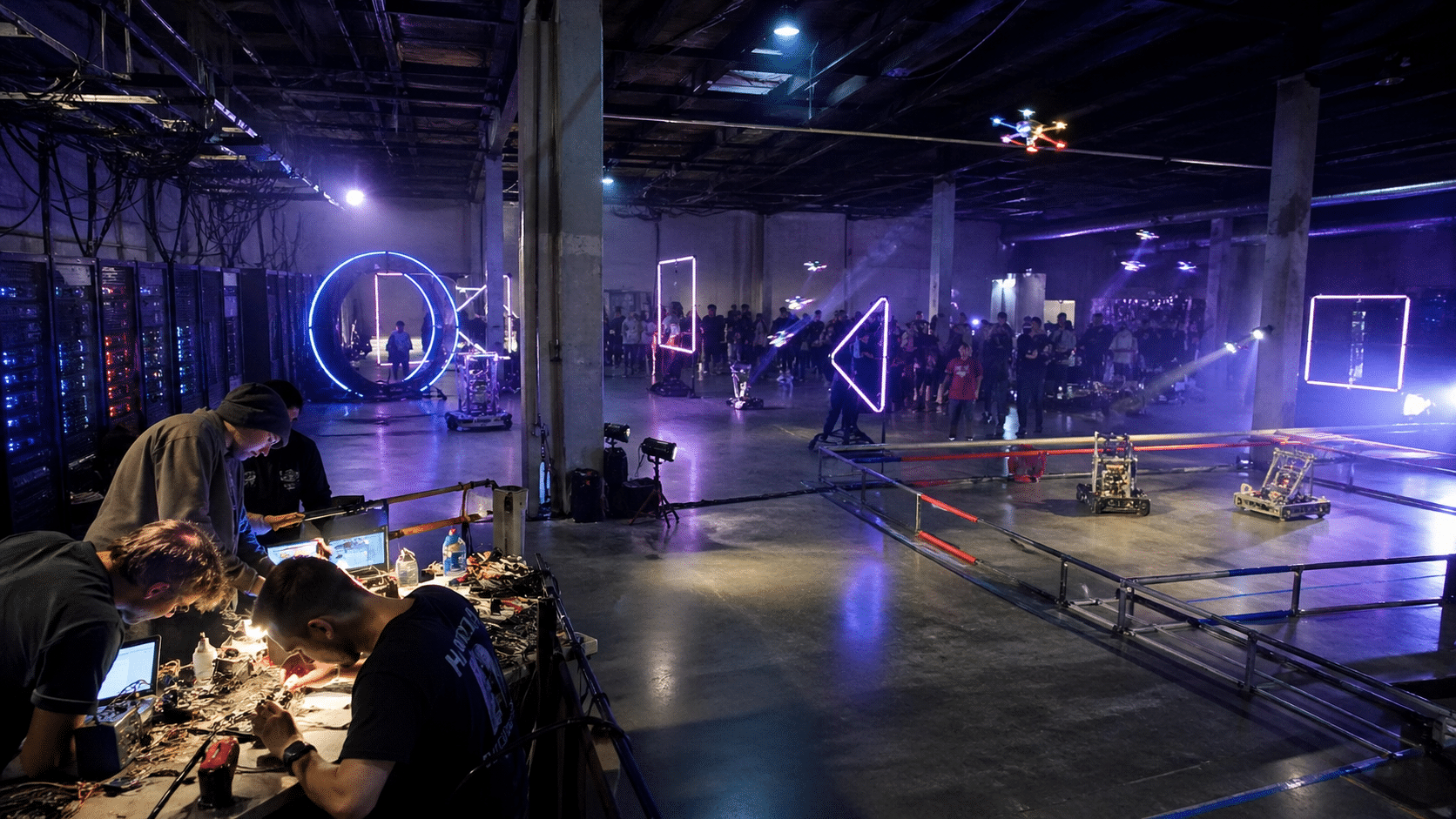

2. Drone Racing and Robotics Arena. The industrial aesthetic of a data center isn’t a limitation here — it’s the point. High ceilings, open spans, and durable surfaces are exactly what FPV drone racing, autonomous robotics competitions, and STEM programming need. Obstacle courses can be constructed and reconfigured. Repair bays and fabrication areas fit naturally into the operational support spaces already built into the facility. Local schools and universities gain a venue for robotics leagues and engineering challenges. The building stops being a liability and becomes an anchor for the next generation of technical talent in the region.

3. Disaster Response and Logistics Hub. A hardened building with massive power capacity, strong physical security, and large floor plates is well-suited for emergency management purposes. Converted as a regional disaster response hub, it becomes a staging facility for supplies, communications infrastructure, emergency personnel, and relief operations. The robust backup power systems — already in place for uptime requirements — make it reliable precisely when the grid is most stressed. For FEMA, state emergency management agencies, or regional coalitions, a pre-positioned facility like this has real strategic value that doesn’t depend on normal economic conditions.

4. Vertical Farming and Controlled Agriculture. The environmental control systems inside a data center — designed to maintain precise temperature and humidity for sensitive electronics — translate surprisingly well to controlled environment agriculture. Vertical farming operations growing leafy greens, herbs, medicinal plants, or specialty crops require the same consistency. Lighting retrofits and hydroponic or aeroponic systems can be installed in the existing shell. The electrical capacity is already there. For regions where the data center consumed local water and generated little local employment, a farming operation that produces food for regional distribution offers a more tangible community benefit.

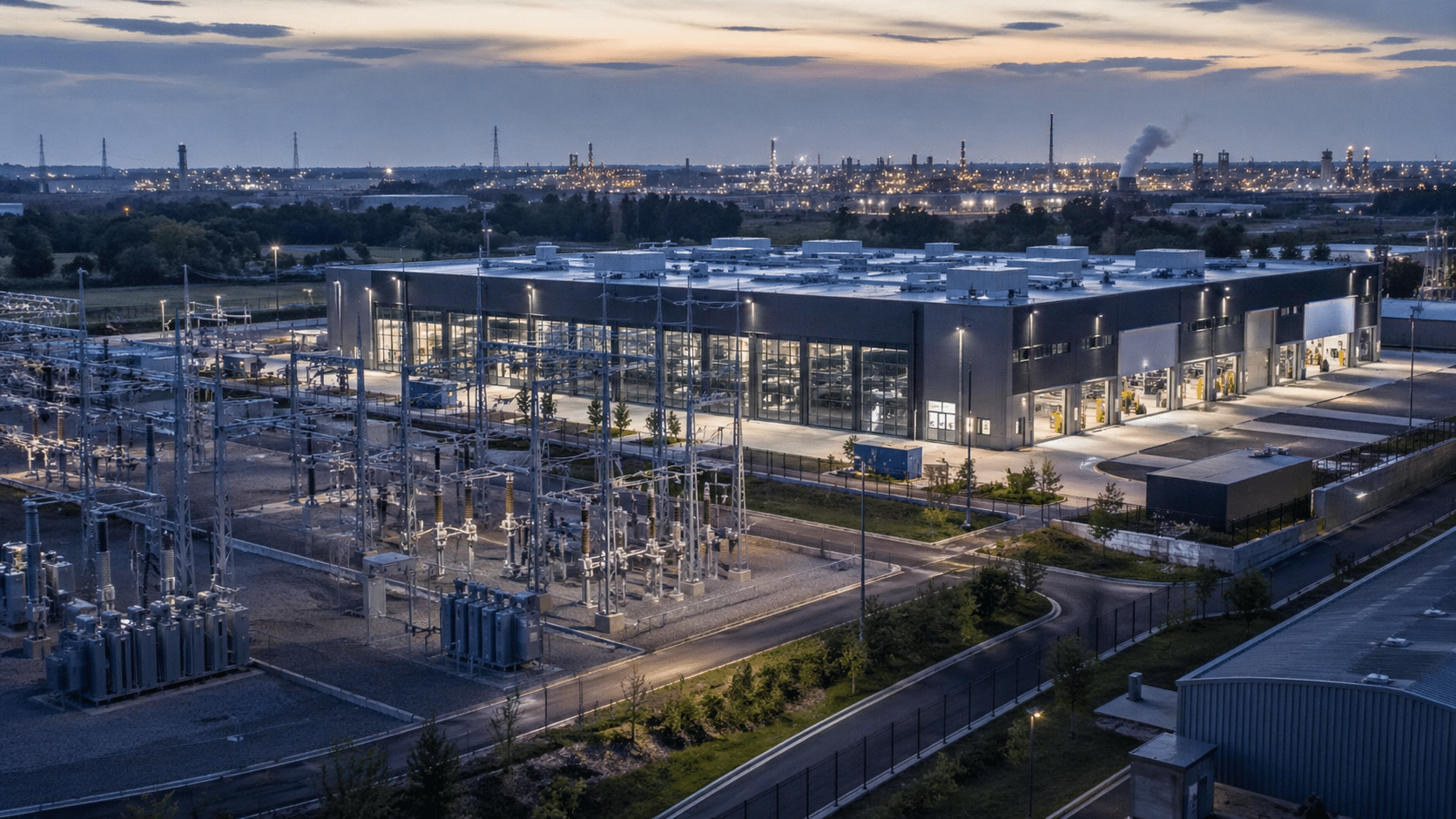

5. Power-Forward Industrial Campus Anchor. Some repurposing conversations focus too much on the building and not enough on the electrical infrastructure underneath it. A former data center site with significant substation capacity and clean power delivery is a rare industrial asset. Advanced manufacturing, battery cell testing, materials science laboratories, and other power-intensive industries face genuine constraints finding sites with the right electrical profile. The building becomes the anchor of an industrial campus where the power infrastructure is the primary draw — something built for one era of technology that now serves the next.

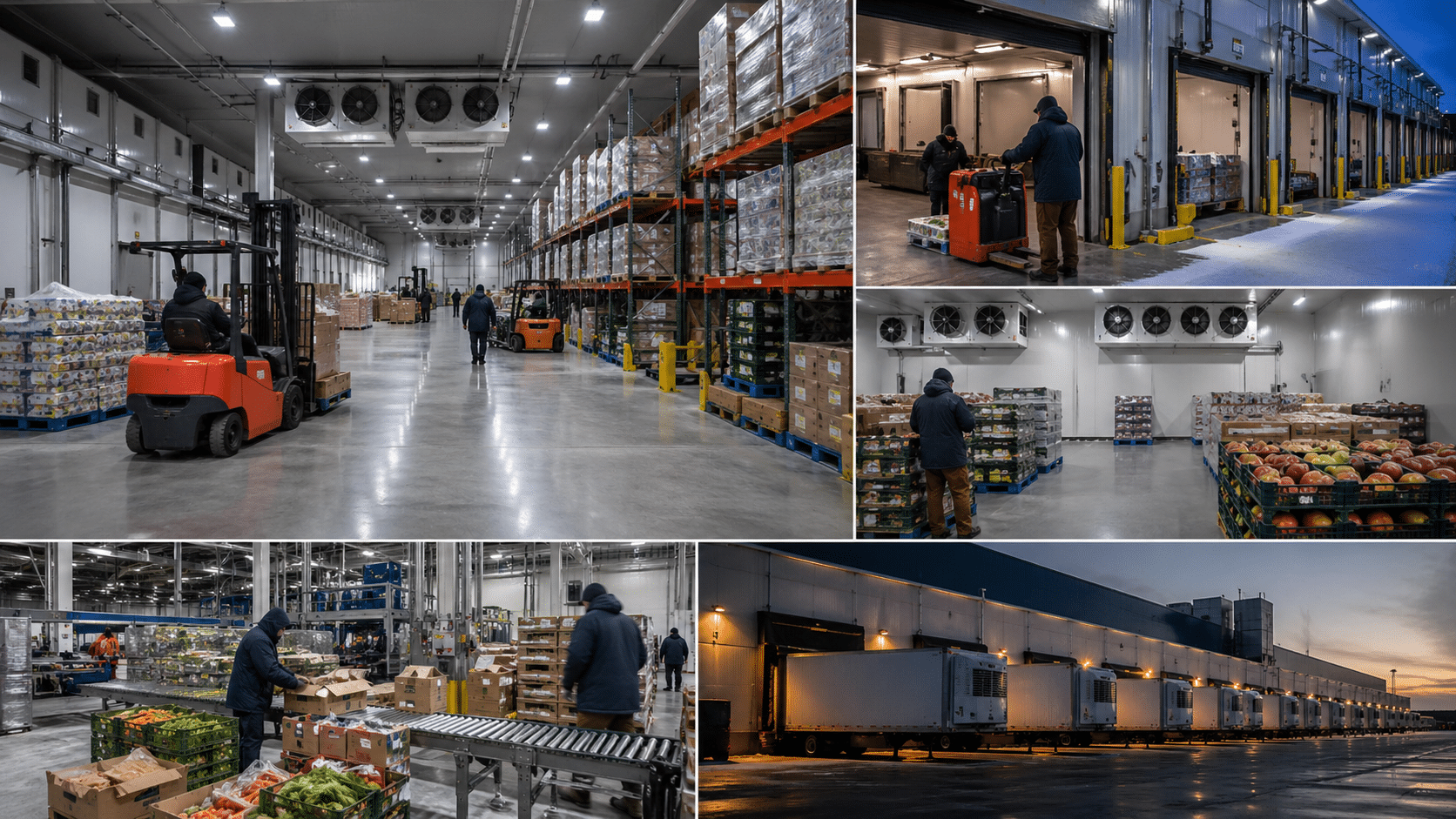

6. Cold Storage and Food Distribution. Cooling is the core competency of a data center. The mechanical systems, the insulated construction, the redundant power: all of it gives a cold storage conversion a head start, although frozen-goods zones would still need dedicated low-temperature refrigeration. A multi-zone cold storage facility with refrigeration for produce, frozen food, pharmaceutical products, and other temperature-sensitive goods can be built into the existing shell with far less new construction than a greenfield development would require. Add distribution lanes, loading dock configurations, and fleet staging areas and you have a regional logistics hub that serves the supply chain needs of the surrounding area.

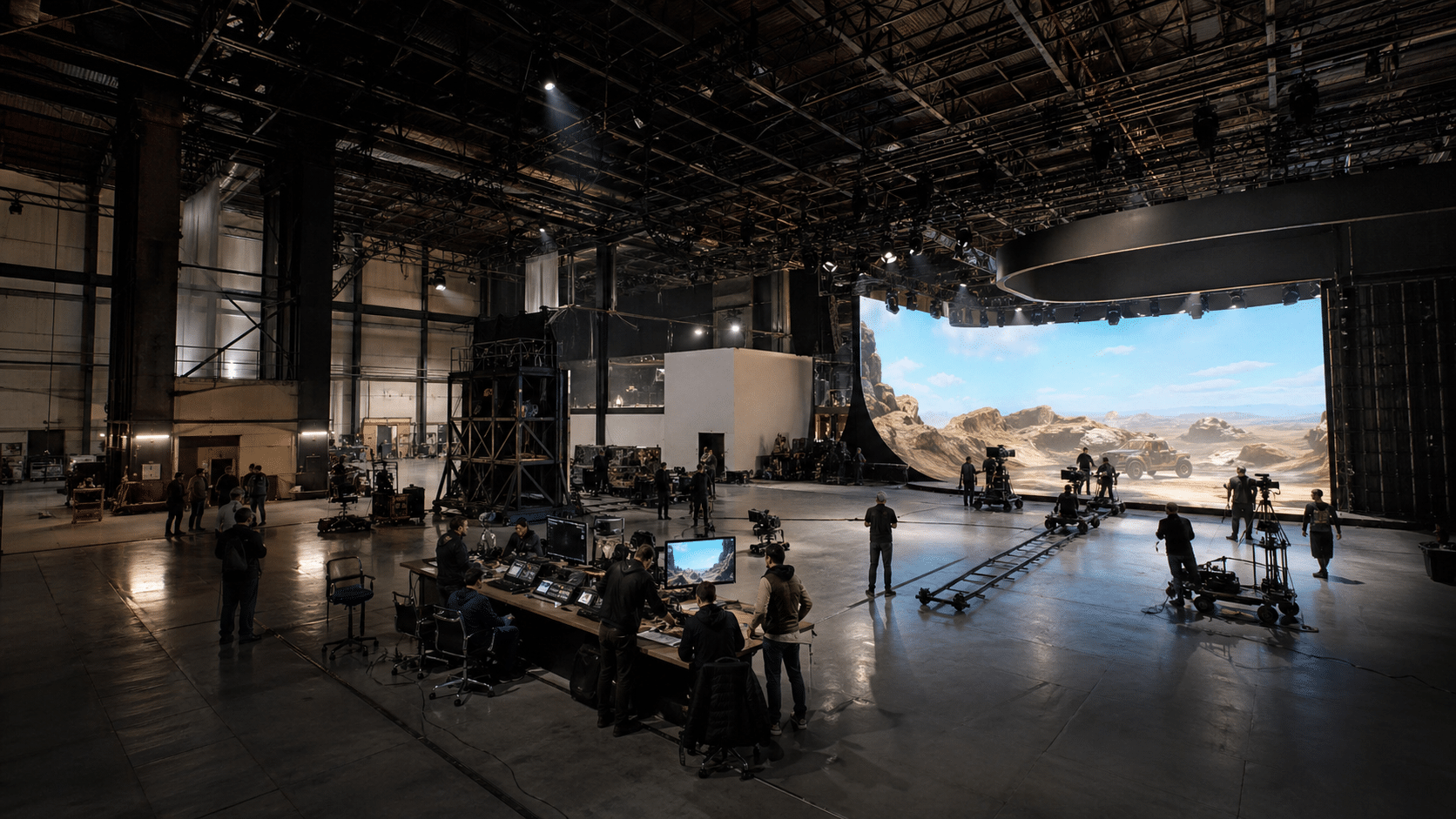

7. Film and Virtual Production Studio Complex. Windowless is a feature, not a bug, for film and television production. Sound stages require exactly what a data center provides: sealed environments with no external light intrusion, high ceiling clearances, durable industrial floors, and robust power for lighting rigs and production equipment. The LED volume production technique — used increasingly for virtual backgrounds and immersive environments — requires significant electrical capacity and high ceiling heights. Post-production suites, equipment storage, costume and prop warehousing, and production offices can all be fitted into the support spaces. For regions that haven’t historically had production infrastructure, this represents a genuine economic development opportunity.

8. Secure Research Facility Shell. Where zoning, regulatory environment, and community context allow, the security architecture and redundancy of a former data center can support sensitive research and development operations. The controlled access zones, monitored perimeters, and robust power are assets for research that requires physical security — whether that’s materials science, pharmaceutical development, advanced energy research, or government-adjacent R&D. The shell is already built to a standard that would cost significantly more to replicate from scratch.

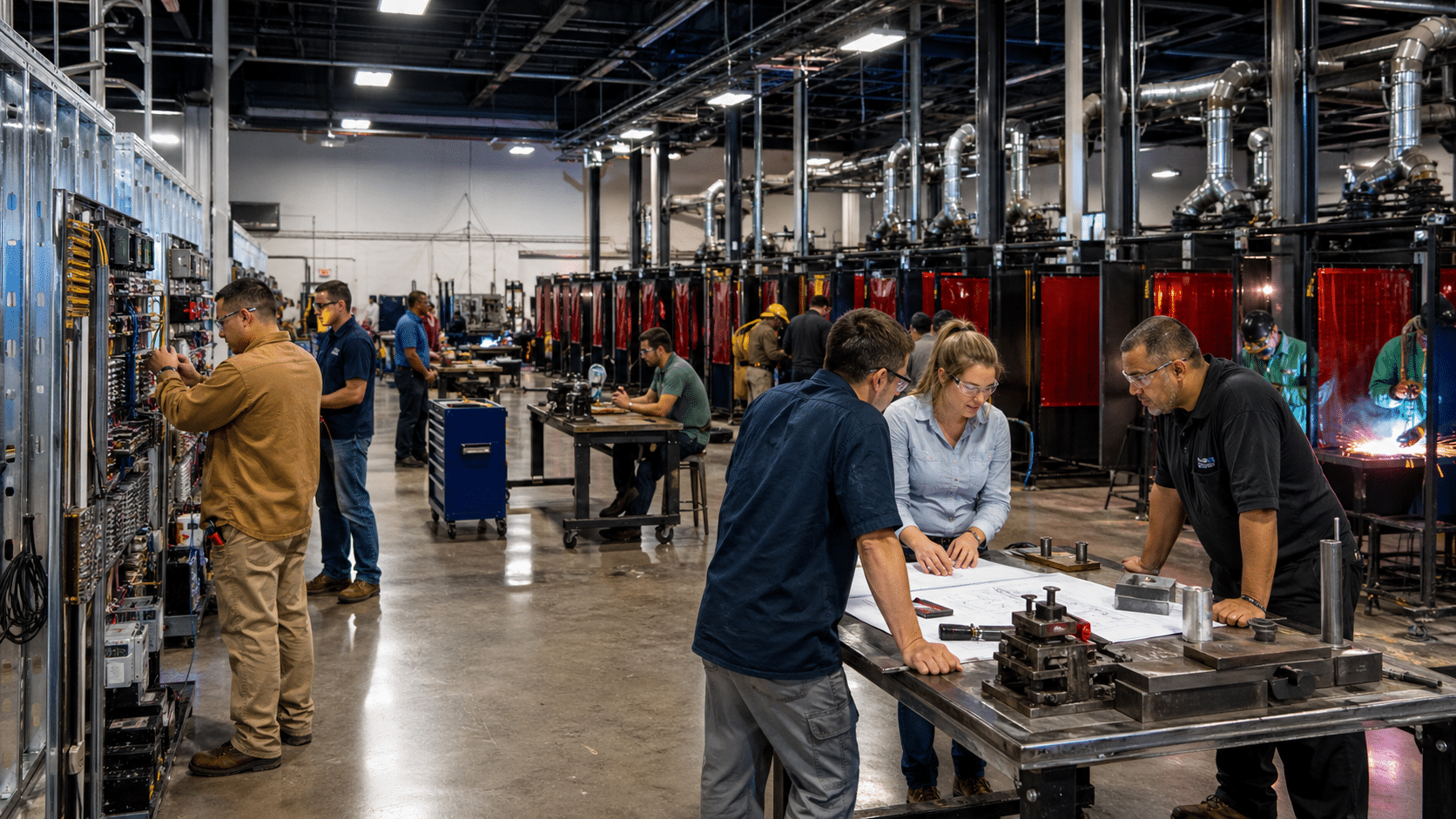

9. Workforce Training and Trade Campus. The need for skilled trade workers — electricians, HVAC technicians, welders, machinists, network engineers — is one of the most consistent labour market challenges in the regions that tend to host data centers. A repurposed facility can house hands-on training programmes, simulation environments, and dorm-style accommodation for students who travel for multi-week intensive courses. The building already has the mechanical and electrical complexity to serve as a live training environment. Job pipelines can be structured with local employers, creating a facility that actively develops the workforce the region needs rather than importing it.

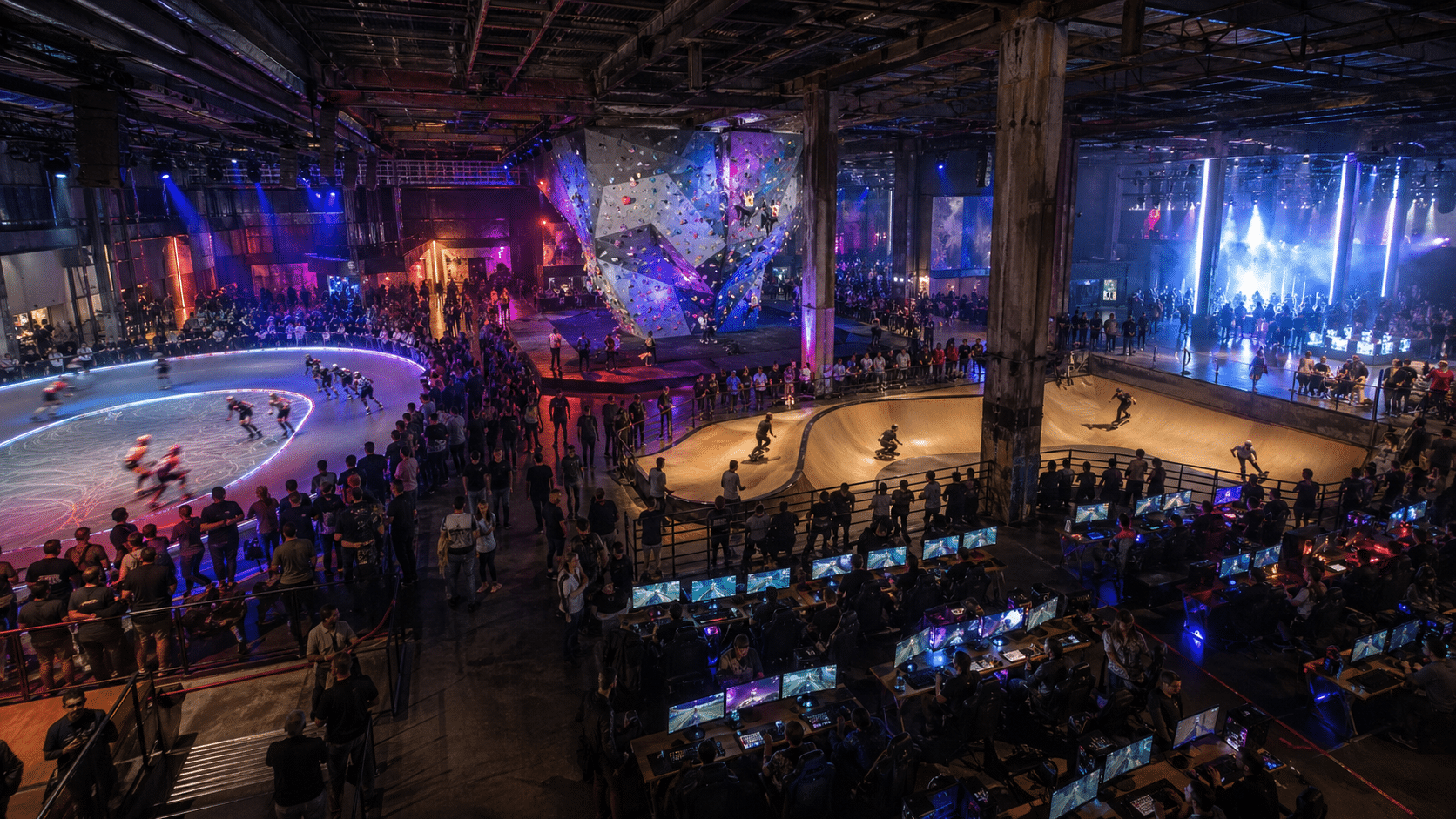

10. Industrial Entertainment Venue. The scale and industrial character of these buildings lend themselves to experience-based entertainment that conventional venues can’t accommodate. Roller derby, indoor climbing, skateboarding and BMX facilities, esports LAN event spaces, trade conventions, immersive art installations, and large-scale live events all benefit from the combination of clear-span floor plates, high ceilings, and robust power. Rather than competing with traditional entertainment venues in city centres, an industrial entertainment complex becomes a regional destination in its own right — particularly in areas where the alternative is a two-hour drive for anything at scale.

The Real Takeaway for AI Data Centers and the Communities That Host Them

Build the Boom With an Exit Plan

If communities are going to host these facilities, the conversation shouldn’t end at “jobs and taxes.” The smarter conversation is: what happens if the demand curve changes? Because it will. Technology doesn’t hold still, and the history of large-scale infrastructure investment is littered with facilities that outlasted their original purpose by decades.

Data centers should come with a future-use plan the same way stadiums and shopping malls should have come with one. Modular design, adaptive reuse options, and community benefit agreements aren’t anti-growth positions; they’re what responsible development looks like when the technology evolves faster than concrete.

If we’re honest, the AI revolution isn’t just a software story; it’s a land, power, water, and politics story. And the counties being asked to absorb the footprint, the power load, and the water draw of these mega facilities deserve more than a ribbon-cutting, promises, and press releases. They deserve contracts, transparency, and a realistic plan B for the day the boom cools off.

If you’re a city leader, developer, or business owner trying to make sense of what’s coming next, now is the moment to ask better questions — before the buildings are everywhere and the options are limited.